Tech Journal The Human Factor: Best Practices for Responsible AI

By Ken Seier / 21 Sep 2020 / Topics: Data and AI Analytics Digital transformation Featured

Over the past few years, the democratization of Artificial Intelligence (AI) has begun to fundamentally alter the way we identify and resolve everyday challenges, from conversational agents for customer service to advanced computer vision models designed to help detect early signs of disease.

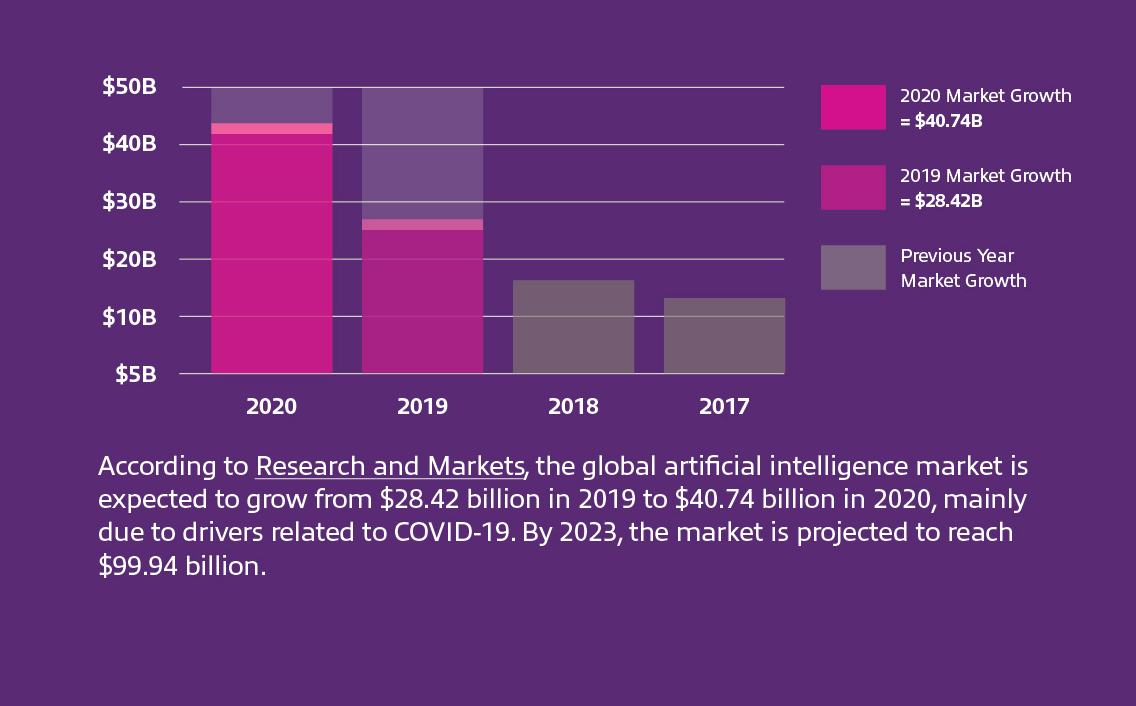

In 2020, the ongoing need to protect public health while maintaining a critical edge in an uncertain market has only accelerated investments in AI. Solutions such as facial recognition for contactless entry, computer vision for mask detection and apps for contact tracing are enabling businesses to reopen to the public more safely and strategically. But unlike a traditional technology implementation, these solutions raise additional social, legal and ethical challenges that need to be considered during development and implementation.

So, how do we design innovative, intelligent systems that solve complex human challenges without sacrificing core values like privacy, security and human empathy?

It starts with a commitment from everyone involved to ensure even the most basic AI models are developed and managed responsibly.

While the potential value of modern AI solutions can’t be overstated, artificial intelligence is still in its infancy as a technology solution (think somewhere along the lines of the automobile back in the 1890s). As a result, there remain some fundamental public misconceptions around what AI is and what it’s capable of. Gaining a better understanding of the capabilities and limitations of this technology is key to informing responsible, effective decisions.

The term “AI” is often associated with a broad human-like intelligence capable of making detailed inferences and connections independently. This is known as general or “strong” AI, and at this point it’s confined to the realms of science fiction. By comparison, modern machine learning models are known as narrow or “weak” AI, and their capabilities are fundamentally limited. These models are trained to accomplish very specific tasks but are incapable of any broader learning.

Cameras used to detect masks or scan temperatures, for example, might leverage some form of face detection to recognize the presence of a human face. But unless explicitly trained to do so, a computer vision system won’t have the ability to identify or store the distinct facial features of any individual.

Even within a limited scope of design, AI isn’t infallible. While total accuracy is always the goal, systems are only as good as their training. Even the most carefully programmed models are fundamentally brittle and can be thrown off by anomalies or outliers. Algorithms may also grow less accurate over time as circumstances or data inputs change, so solutions need to be routinely re-evaluated to make sure they’re continuing to perform as expected.
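
As a rough illustration of what routine re-evaluation can look like in practice, the minimal Python sketch below compares a model's accuracy on a recent batch of labeled examples against the accuracy it had at deployment, and flags the model for human review when the drop exceeds a tolerance. The function names, the `baseline_accuracy` figure and the 5-point `tolerance` are all hypothetical choices made for this example, not part of any particular product or framework.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class EvaluationResult:
    accuracy: float      # accuracy measured on the recent batch
    needs_review: bool   # True when accuracy has dropped too far below baseline


def accuracy(predictions: Sequence[int], labels: Sequence[int]) -> float:
    """Return the fraction of predictions that match the ground-truth labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if len(labels) == 0:
        raise ValueError("cannot evaluate an empty batch")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def re_evaluate(
    predictions: Sequence[int],
    labels: Sequence[int],
    baseline_accuracy: float,
    tolerance: float = 0.05,  # hypothetical threshold; tune per use case
) -> EvaluationResult:
    """Compare recent accuracy to the deployment baseline and flag large drops."""
    current = accuracy(predictions, labels)
    drop = baseline_accuracy - current
    return EvaluationResult(accuracy=current, needs_review=drop > tolerance)


if __name__ == "__main__":
    # Illustrative data only: 1 = "mask detected", 0 = "no mask detected".
    recent_predictions = [1, 1, 0, 1, 0, 0, 1, 1, 0, 1]
    recent_labels = [1, 1, 0, 1, 0, 1, 0, 1, 1, 1]
    result = re_evaluate(recent_predictions, recent_labels, baseline_accuracy=0.9)
    print(result)
```

A check like this only signals that something has changed. Deciding whether to retrain, collect new data or retire the model remains a human decision.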

The effectiveness of the model, the amount of personal information gathered, when and where data is stored, and how often the system is tuned all depend on the organization’s decision-makers and developers.

This is where the principles of responsible AI come into play.

Responsible AI refers to a general set of best practices, frameworks and tools for promoting transparency, accountability and the ethical use of AI technologies.

All major AI providers use a similar framework to guide their decision-making — and there’s a wealth of scholarly research on the topic — but in general, guidelines focus on a few core concepts.

Responsibly leveraged, the potential benefits of AI are near limitless, from improving business processes to fighting disease.

Regardless of application, everyone involved in the decision-making process has a role to play in developing something that contributes to the business and humanity as a whole in a positive way. When in doubt, take a strategic pause to step back and consider the broader implications of the questions your team faces and err on the side of caution.

Ultimately, no matter how advanced the technology, we have to be humans first.
